langflow

Langflow is a powerful tool for building and deploying AI-powered agents and workflows.

ToolJet is the open-source foundation of ToolJet AI - the AI-native platform for building internal tools, dashboards, business applications, workflows, and AI agents 🚀

Google Workspace CLI — one command-line tool for Drive, Gmail, Calendar, Sheets, Docs, Chat, Admin, and more. Dynamically built from Google Discovery Service. Includes AI agent skills.

npm install -g @googleworkspace/cli

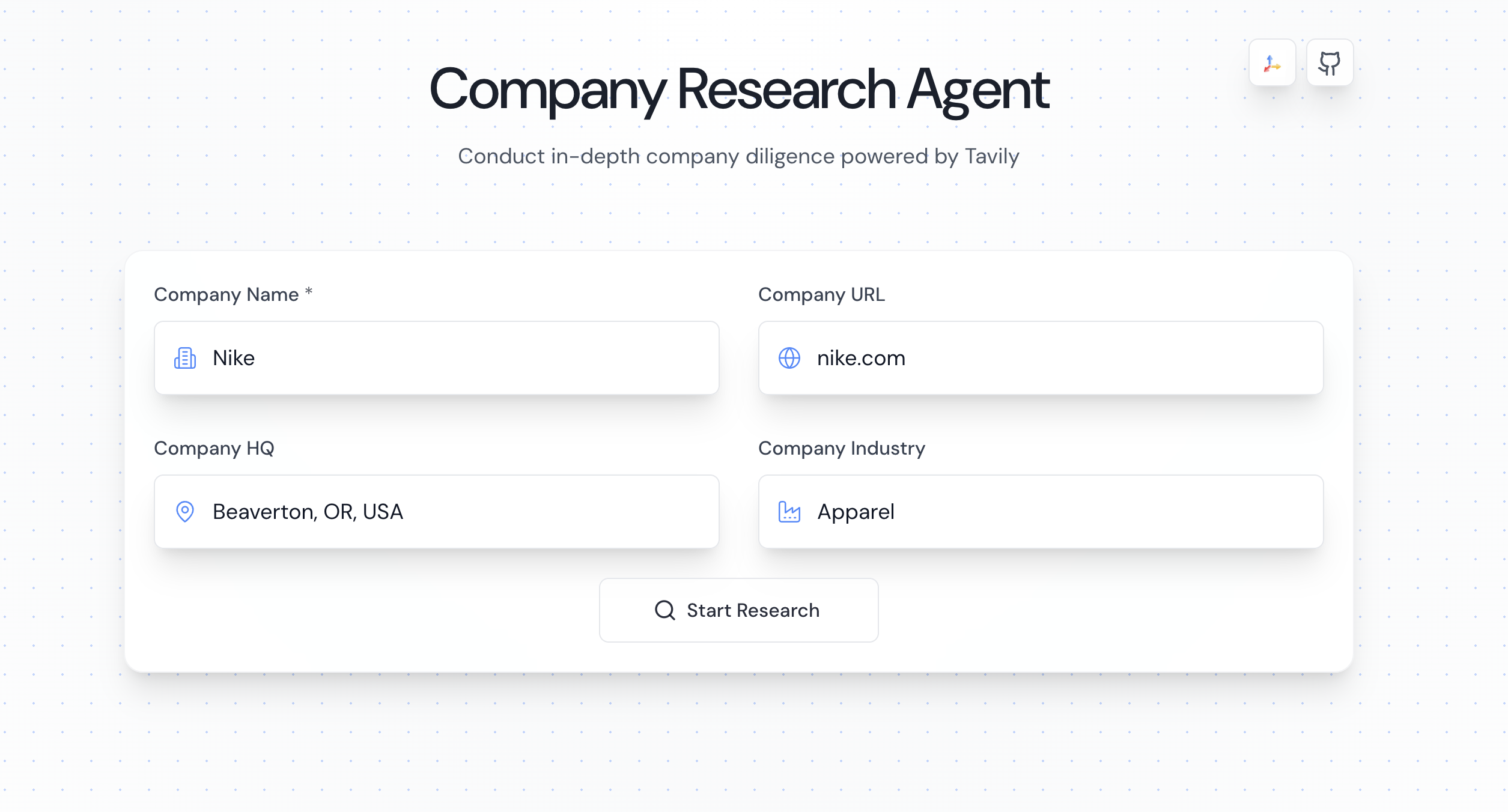

A multi-agent tool that generates comprehensive company research reports. The platform uses a pipeline of AI agents to gather, curate, and synthesize information about any company.

✨Check it out online! https://companyresearcher.tavily.com ✨

https://github.com/user-attachments/assets/0e373146-26a7-4391-b973-224ded3182a9

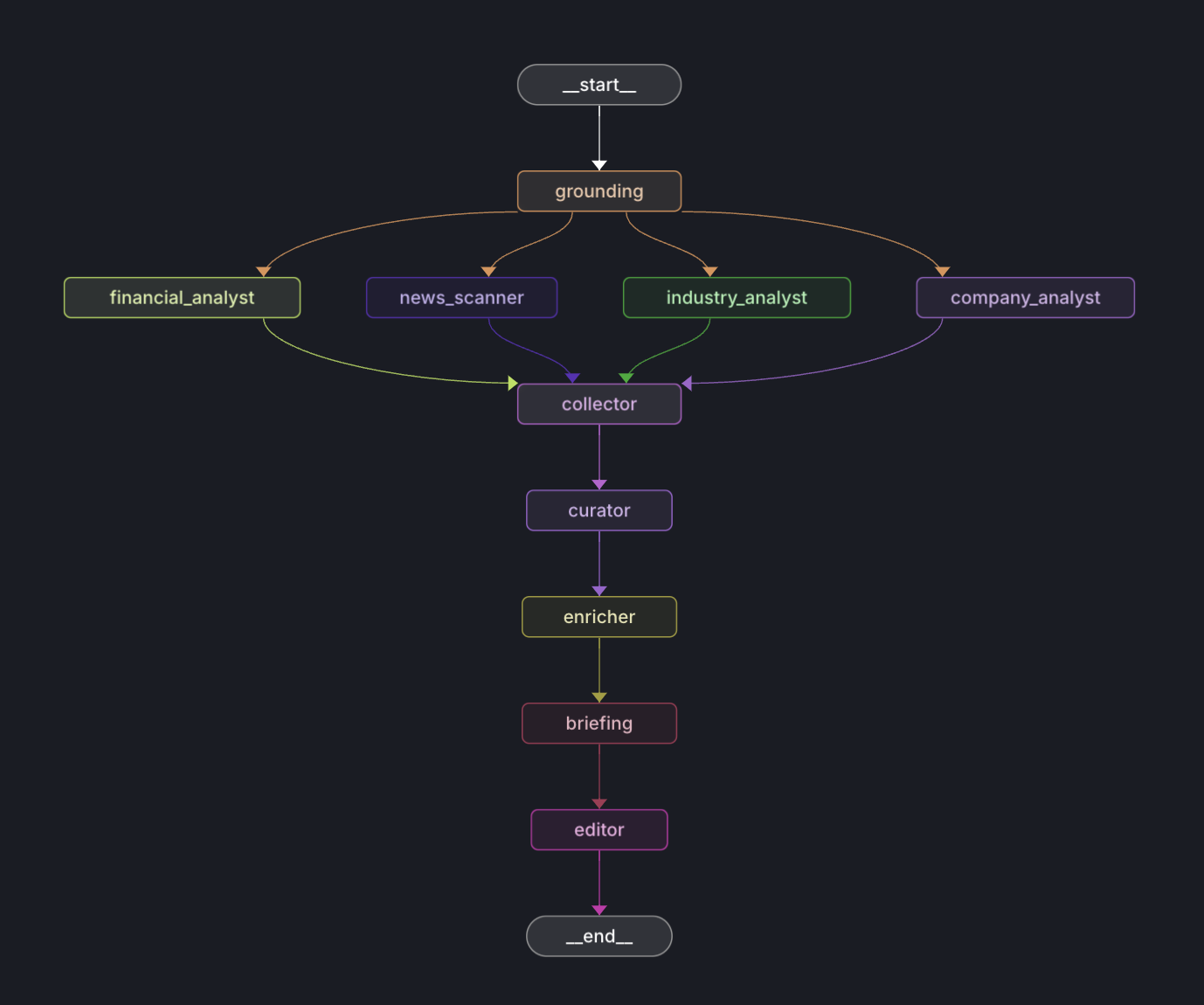

The platform follows an agentic framework with specialized nodes that process data sequentially:

Research Nodes:

- CompanyAnalyzer: Researches core business information
- IndustryAnalyzer: Analyzes market position and trends
- FinancialAnalyst: Gathers financial metrics and performance data
- NewsScanner: Collects recent news and developments

Processing Nodes:

- Collector: Aggregates research data from all analyzers
- Curator: Implements content filtering and relevance scoring
- Briefing: Generates category-specific summaries using Gemini 2.5 Flash
- Editor: Compiles and formats the briefings into a final report using GPT-5.1
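The sequential flow of nodes described above can be sketched roughly as follows. This is an illustrative sketch only, not the project's actual code: the node names come from the lists above, but the state dictionary, the function signatures, and the placeholder logic inside each node are assumptions made for the example (a real node would call search APIs and language models).

```python
from typing import Any, Callable, Dict, List

# Hypothetical shared state passed from node to node (an assumption for this sketch).
State = Dict[str, Any]
Node = Callable[[State], State]

RESEARCH_NODES = ("CompanyAnalyzer", "IndustryAnalyzer", "FinancialAnalyst", "NewsScanner")


def make_research_node(name: str) -> Node:
    """Return a stand-in research node that records a placeholder finding."""
    def node(state: State) -> State:
        findings = dict(state.get("findings", {}))
        findings[name] = f"{name} notes on {state['company']}"
        return {**state, "findings": findings}
    return node


def collector(state: State) -> State:
    """Aggregate the findings from every analyzer into one document list."""
    docs = [{"source": src, "text": text} for src, text in state["findings"].items()]
    return {**state, "documents": docs}


def curator(state: State) -> State:
    """Drop empty documents (a stand-in for real relevance filtering)."""
    kept = [d for d in state["documents"] if d["text"].strip()]
    return {**state, "documents": kept}


def briefing(state: State) -> State:
    """Produce one summary per source (a real node would call an LLM here)."""
    briefs = {d["source"]: f"Summary: {d['text']}" for d in state["documents"]}
    return {**state, "briefings": briefs}


def editor(state: State) -> State:
    """Compile the briefings into a single report string."""
    body = "\n".join(state["briefings"].values())
    return {**state, "report": f"Report for {state['company']}\n{body}"}


def run_pipeline(company: str) -> State:
    """Run every node in order, passing the accumulated state along."""
    nodes: List[Node] = [make_research_node(n) for n in RESEARCH_NODES]
    nodes += [collector, curator, briefing, editor]
    state: State = {"company": company}
    for node in nodes:
        state = node(state)
    return state
```

Calling `run_pipeline("Acme")` returns a state dictionary whose `report` key holds the compiled text. Each node returns a new dictionary instead of mutating its input, which keeps the individual steps easy to test in isolation.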

The platform leverages separate models for optimal performance:

Gemini 2.5 Flash (briefing.py):

GPT-5.1 (editor.py):

This approach combines Gemini's strength in handling large context windows with GPT-5.1's precision in following specific formatting instructions.

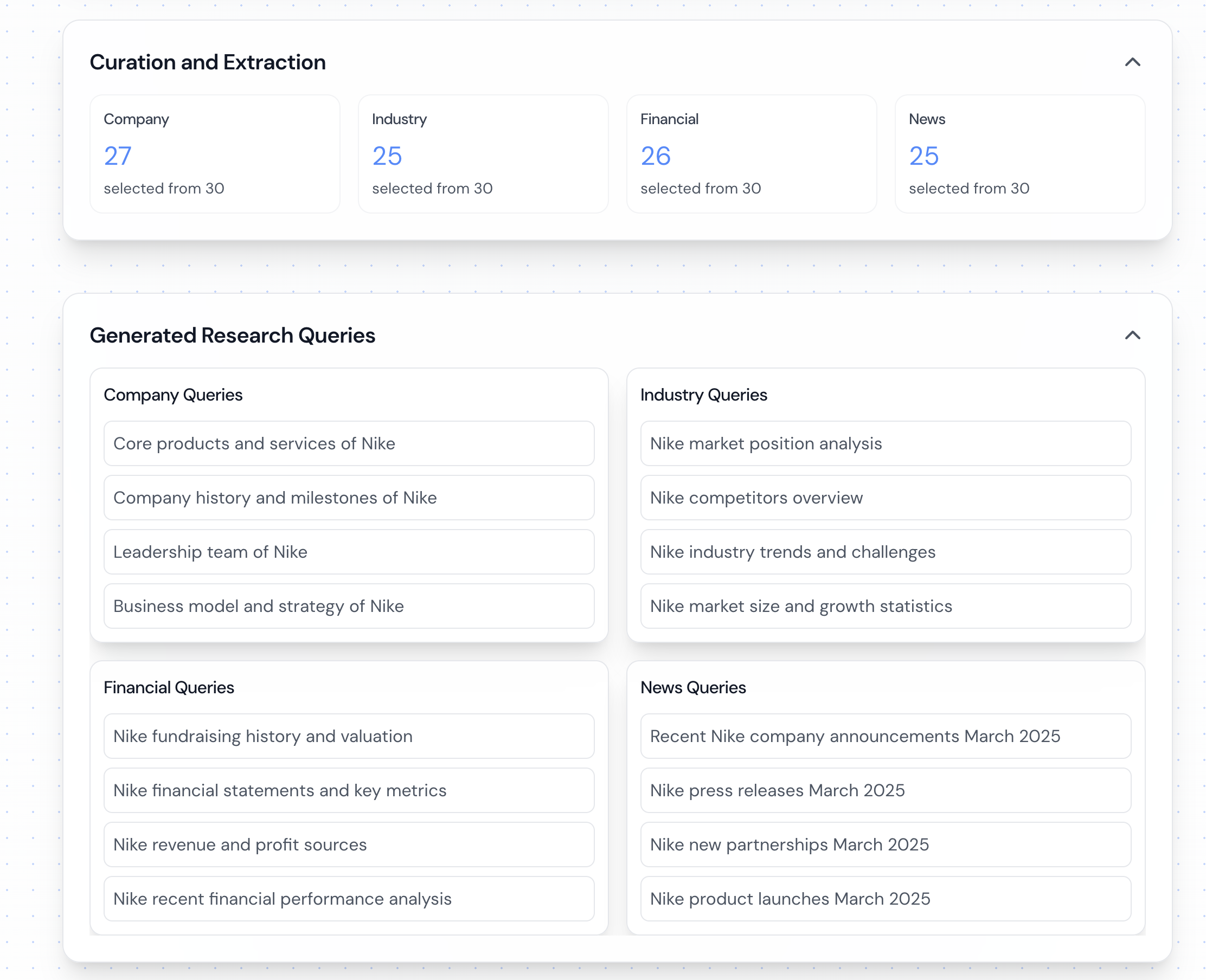

The platform uses a content filtering system in curator.py:

Relevance Scoring:

Document Processing:
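As a rough illustration of score-based content filtering, the sketch below keeps only documents above a relevance threshold, removes duplicate URLs, and caps the result size. It is not taken from curator.py: the `Document` fields, the `min_score` threshold, and the `max_docs` cap are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class Document:
    # Hypothetical document shape; the real fields in curator.py may differ.
    url: str
    title: str
    score: float  # relevance score, assumed to lie in [0, 1]


def curate(docs: Iterable[Document], min_score: float = 0.4, max_docs: int = 30) -> List[Document]:
    """Keep the highest-scoring unique documents at or above min_score."""
    seen_urls = set()
    kept: List[Document] = []
    # Visit documents from most to least relevant.
    for doc in sorted(docs, key=lambda d: d.score, reverse=True):
        # Everything after this point scores lower, so stop early.
        if doc.score < min_score or len(kept) >= max_docs:
            break
        if doc.url in seen_urls:
            continue  # skip duplicates of an already kept URL
        seen_urls.add(doc.url)
        kept.append(doc)
    return kept
```

Sorting first means the loop can stop at the first document below the threshold, and a duplicate URL always loses to its higher-scoring copy.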

The platform implements a simple polling-based communication system:
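A polling client for this kind of system might look like the sketch below. It is an illustration under stated assumptions, not the project's frontend code: the `/research/{job_id}/report` path appears in this README, but the idea that an unfinished report answers with HTTP 404, the JSON response body, and the helper names `poll_for_report` and `make_http_fetcher` are all hypothetical.

```python
import json
import time
import urllib.error
import urllib.request
from typing import Callable, Optional


def poll_for_report(
    fetch_report: Callable[[], Optional[dict]],
    interval_s: float = 2.0,
    timeout_s: float = 300.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> dict:
    """Call fetch_report until it returns a report or the timeout elapses."""
    deadline = clock() + timeout_s
    while True:
        report = fetch_report()
        if report is not None:
            return report
        if clock() >= deadline:
            raise TimeoutError("report was not ready before the timeout")
        sleep(interval_s)


def make_http_fetcher(base_url: str, job_id: str) -> Callable[[], Optional[dict]]:
    """Build a fetcher for GET /research/{job_id}/report.

    Assumption: the server answers 404 while the report is not ready
    and returns a JSON body once it is. Adjust to the real API contract.
    """
    url = f"{base_url}/research/{job_id}/report"

    def fetch() -> Optional[dict]:
        try:
            with urllib.request.urlopen(url) as resp:
                return json.load(resp)
        except urllib.error.HTTPError as err:
            if err.code == 404:
                return None  # assumed "not ready yet" signal
            raise

    return fetch
```

With a running backend, usage would be along the lines of `poll_for_report(make_http_fetcher("http://localhost:8000", job_id))`. Passing `sleep` and `clock` as parameters lets the loop be tested without real waiting.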

Backend Implementation:

Frontend Integration:

/research/{job_id}/report endpoint

API Endpoints:

- POST /research: Submit new research request
- GET /research/{job_id}/report: Poll for completed report
- POST /generate-pdf: Generate PDF from report content

The easiest way to get started is using the setup script, which automatically detects and uses uv for faster Python package installation when available:

git clone https://github.com/guy-hartstein/tavily-company-research.git

cd tavily-company-research

chmod +x setup.sh

./setup.sh

The setup script will:

uv for faster Python package installation (if available)

💡 Pro Tip: Install uv for significantly faster Python package installation:

curl -LsSf https://astral.sh/uv/install.sh | sh

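You'll need the following API keys ready (these match the variables used in the .env files in this README):

- Tavily API key
- Google Gemini API key
- OpenAI API key
- Google Maps API key (used by the frontend)
- MongoDB URI (optional, for persistence)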

If you prefer to set up manually, follow these steps:

git clone https://github.com/guy-hartstein/tavily-company-research.git

cd tavily-company-research

# Optional: Create and activate virtual environment

# With uv (faster - recommended if available):

uv venv .venv

source .venv/bin/activate

# Or with standard Python:

# python -m venv .venv

# source .venv/bin/activate

# Install Python dependencies

# With uv (faster):

uv pip install -r requirements.txt

# Or with pip:

# pip install -r requirements.txt

cd ui

npm install

This project requires two separate .env files for the backend and frontend.

For the Backend:

Create a .env file in the project's root directory and add your backend API keys:

TAVILY_API_KEY=your_tavily_key

GEMINI_API_KEY=your_gemini_key

OPENAI_API_KEY=your_openai_key

# Optional: Enable MongoDB persistence

# MONGODB_URI=your_mongodb_connection_string
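Before starting the backend, it can help to confirm that the .env file defines every required key. The sketch below is a hypothetical helper, not part of the project: the key names are taken from the example above, while `parse_env` and `missing_keys` are names invented for illustration, and the parser handles only simple `KEY=value` lines.

```python
from typing import Dict, Iterable, List

# Key names from the backend .env example; MONGODB_URI is optional and not checked.
REQUIRED_KEYS = ("TAVILY_API_KEY", "GEMINI_API_KEY", "OPENAI_API_KEY")


def parse_env(text: str) -> Dict[str, str]:
    """Parse simple KEY=value lines, skipping blanks and # comments."""
    values: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Strip surrounding whitespace and optional quotes from the value.
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def missing_keys(env: Dict[str, str], required: Iterable[str] = REQUIRED_KEYS) -> List[str]:
    """Return the required keys that are absent or empty."""
    return [key for key in required if not env.get(key)]
```

For example, `missing_keys(parse_env(open(".env").read()))` returns an empty list when all three backend keys are set.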

For the Frontend:

Create a .env file inside the ui directory. You can copy the example file first:

cp ui/.env.development.example ui/.env

Then, open ui/.env and add your frontend environment variables:

VITE_API_URL=http://localhost:8000

VITE_GOOGLE_MAPS_API_KEY=your_google_maps_api_key_here

The application can be run using Docker and Docker Compose:

git clone https://github.com/guy-hartstein/tavily-company-research.git

cd tavily-company-research

The Docker setup uses two separate .env files.

For the Backend:

Create a .env file in the project's root directory with your backend API keys:

TAVILY_API_KEY=your_tavily_key

GEMINI_API_KEY=your_gemini_key

OPENAI_API_KEY=your_openai_key

# Optional: Enable MongoDB persistence

# MONGODB_URI=your_mongodb_connection_string

For the Frontend:

Create a .env file inside the ui directory. You can copy the example file first:

cp ui/.env.development.example ui/.env

Then, open ui/.env and add your frontend environment variables:

VITE_API_URL=http://localhost:8000

VITE_GOOGLE_MAPS_API_KEY=your_google_maps_api_key_here

docker compose up --build

This will start both the backend and frontend services:

- Backend: http://localhost:8000
- Frontend: http://localhost:5174

To stop the services:

docker compose down

Note: When updating environment variables in .env, you'll need to restart the containers:

docker compose down && docker compose up

# Option 1: Direct Python Module

python -m application

# Option 2: FastAPI with Uvicorn

uvicorn application:app --reload --port 8000

cd ui

npm run dev

Access the application at http://localhost:5173

Start the backend server (choose one option):

Option 1: Direct Python Module

python -m application

Option 2: FastAPI with Uvicorn

# Install uvicorn if not already installed

# With uv (faster):

uv pip install uvicorn

# Or with pip:

# pip install uvicorn

# Run the FastAPI application with hot reload

uvicorn application:app --reload --port 8000

The backend will be available at:

http://localhost:8000

Start the frontend development server:

cd ui

npm run dev

Access the application at http://localhost:5173

⚡ Performance Note: If you used uv during setup, you'll benefit from significantly faster package installation and dependency resolution. uv is a modern Python package manager written in Rust that can be 10-100x faster than pip.

The application can be deployed to various cloud platforms. Here are some common options:

Install the AWS Elastic Beanstalk (EB) CLI:

pip install awsebcli

Initialize EB application:

eb init -p python-3.11 tavily-research

Create and deploy:

eb create tavily-research-prod

Choose the platform that best suits your needs. The application is platform-agnostic and can be hosted anywhere that supports Python web applications.

- Create a feature branch (git checkout -b feature/amazing-feature)
- Commit your changes (git commit -m 'Add amazing feature')
- Push to the branch (git push origin feature/amazing-feature)

This project is licensed under the MIT License - see the LICENSE file for details.